Businesses rely more than ever on data and AI to innovate, offer value to customers, and stay competitive. The adoption of machine learning (ML), created a need…

Businesses rely more than ever on data and AI to innovate, offer value to customers, and stay competitive. The adoption of machine learning (ML), created a need for tools, processes, and organizational principles to manage code, data, and models that work reliably, cost-effectively, and at scale. This is broadly known as machine learning operations (MLOps).

The world is venturing rapidly into a new generative AI era powered by foundation models and large language models (LLMs) in particular. The release of ChatGPT further accelerated this transition.

New and specialized areas of generative AI operations (GenAIOps) and large language model operations (LLMOps) emerged as an evolution of MLOps for addressing the challenges of developing and managing generative AI and LLM-powered apps in production.

In this post, we outline the generative AI app development journey, define the concepts of GenAIOps and LLMOps, and compare them with MLOps. We also explain why mastering operations becomes paramount for business leaders executing an enterprise-wide AI transformation.

Building modern generative AI apps for enterprises

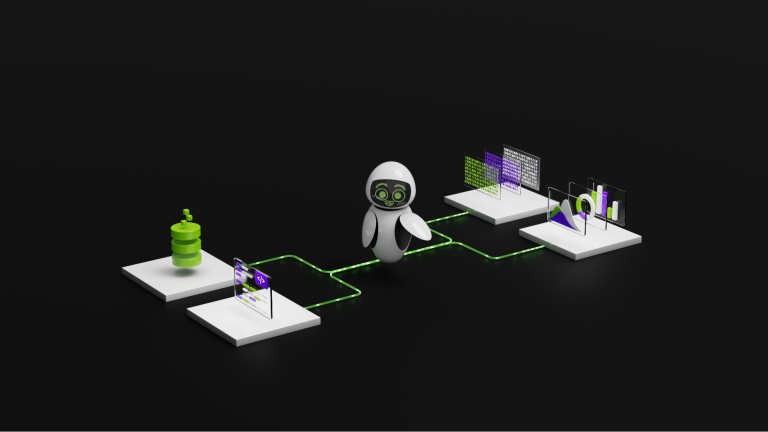

The journey towards a modern generative AI app starts from a foundation model, which goes through a pretraining stage to learn the foundational knowledge about the world and gain emergent capabilities. The next step is aligning the model with human preferences, behavior, and values using a curated dataset of human-generated prompts and responses. This gives the model precise instruction-following capabilities. Users can choose to train their own foundation model or use a pretrained model.

For example, various foundation models such as NVIDIA Nemotron-3 and community models like Llama are available through NVIDIA AI Foundations. These are all enhanced with NVIDIA proprietary algorithmic and system optimizations, security, and enterprise-grade support covered by NVIDIA AI Enterprise.

Next, comes the customization stage. A foundation model is combined with a task-specific prompt or fine-tuned on a curated enterprise dataset. The knowledge of a foundation model is limited to the pretraining and fine-tuning data, becoming outdated over time unless the model is continuously retrained, which can be costly.

A retrieval augmented generation (RAG) workflow is used to maintain freshness and keep the model grounded with external knowledge during query time. This is one of the most critical steps in the generative AI app development lifecycle and when a model learns unique relationships hidden in enterprise data.

After customization, the model is ready for real-world use either independently or as a part of a chain, which combines multiple foundation models and APIs to deliver the end-to-end application logic. At this point, it is crucial to test the complete AI system for accuracy, speed, and vulnerabilities, and add guardrails to ensure the model outputs are accurate, safe, and secure.

Finally, the feedback loop is closed. Users interact with an app through the user interface or collect data automatically using system instrumentation. This information can be used to update the model and the A/B test continuously, increasing its value to the customers.

An enterprise typically has many generative AI apps tailored to different use cases, business functions, and workflows. This AI portfolio requires continuous oversight and risk management to ensure smooth operation, ethical use, and prompt alerts for addressing incidents, biases, or regressions.

GenAIOps accelerates this journey from research to production through automation. It optimizes development and operational costs, improves the quality of models, adds robustness to the model evaluation process, and guarantees sustained operations at scale.

Understanding GenAIOps, LLMOps, and RAGOps

There are several terms associated with generative AI. We outline the definitions in the following section.

Think of AI as a series of nested layers. At the outermost layer, ML covers intelligent automation, where the logic of the program is not explicitly defined but learned from data. As we dive deeper, we encounter specialized AI types, like those built on LLMs or RAGs. Similarly, there are nested concepts enabling reproducibility, reuse, scalability, reliability, and efficiency.

Each one builds on the previous, adding, or refining capabilities–from foundational MLOps to the newly developed RAGOps lifecycle:

MLOps is the overarching concept covering the core tools, processes, and best practices for end-to-end machine learning system development and operations in production.

GenAIOps extends MLOps to develop and operationalize generative AI solutions. The distinct characteristic of GenAIOps is the management of and interaction with a foundation model.

LLMOps is a distinct type of GenAIOps focused specifically on developing and productionizing LLM-based solutions.

RAGOps is a subclass of LLMOps focusing on the delivery and operation of RAGs, which can also be considered the ultimate reference architecture for generative AI and LLMs, driving massive adoption.

GenAIOps and LLMOps span the entire AI lifecycle. This includes foundation model pretraining, model alignment through supervised fine-tuning, and reinforcement learning from human feedback (RLHF), customization to a specific use case coupled with pre/post-processing logic, chaining with other foundation models, APIs, and guardrails. RAGOps scope doesn’t include pretraining and assumes that a foundation model is provided as an input into the RAG lifecycle.

GenAIOps, LLMOps, and RAGOps are not only about tools or platform capabilities to enable AI development. They also cover methodologies for setting goals and KPIs, organizing teams, measuring progress, and continuously improving operational processes.

Extending MLOps for generative AI and LLMs

With the key concepts defined, we can focus on the nuances differentiating one from the other.

MLOps

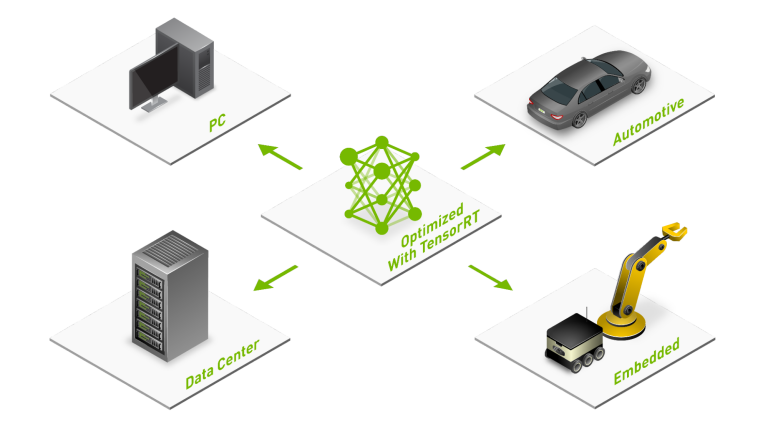

MLOps lays the foundation for a structured approach to the development, training, evaluation, optimization, deployment, inference, and monitoring of machine learning models in production.

The key MLOps ideas and capabilities are relevant for generative AI, including the following.

Infrastructure management: request, provision, and configure compute, storage, and networking resources to access the underlying hardware programmatically.

Data management: collect, ingest, store, process, and label data for training and evaluation. Configure role-based access control; dataset search, browsing, and exploration; data provenance tracking, data logging, dataset versioning, metadata indexing, data quality validation, dataset cards, and dashboards for data visualization.

Workflow and pipeline management: work with cloud resources or a local workstation; connect data preparation, model training, model evaluation, model optimization, and model deployment steps into an end-to-end automated and scalable workflow combining data and compute.

Model management: train, evaluate, and optimize models for production; store and version models along with their model cards in a centralized model registry; assess model risks, and ensure compliance with standards.

Experiment management and observability: track and compare different machine learning model experiments, including changes in training data, models, and hyperparameters. Automatically search the space of possible model architectures and hyperparameters for a given model architecture; analyze model performance during inference, monitor model inputs and outputs for concept drift.

Interactive development: manage development environments and integrate with external version control systems, desktop IDEs, and other standalone developer tools, making it easier for teams to prototype, launch jobs, and collaborate on projects.

GenAIOps

GenAIOps encompasses MLOps, code development operations (DevOps), data operations (DataOps), and model operations (ModelOps), for all generative AI workloads from language, to image, to multimodal. Data curation and model training, customization, evaluation, optimization, deployment, and risk management must be rethought for generative AI.

New emerging GenAIOps capabilities include:

Synthetic data management: extend data management with a new native generative AI capability. Generate synthetic training data through domain randomization to increase transfer learning capabilities. Declaratively define and generate edge cases to evaluate, validate, and certify model accuracy and robustness.

Embedding management: represent data samples of any modality as dense multi-dimensional embedding vectors; generate, store, and version embeddings in a vector database. Visualize embeddings for improvised exploration. Find relevant contextual information through vector similarity search for RAGs, data labeling, or data curation as a part of the active learning loop. For GenAIOps, using embeddings and vector databases replaces feature management and feature stores relevant to MLOps.

Agent/chain management: define complex multi-step application logic. Combine multiple foundation models and APIs together, and augment a foundation model with external memory and knowledge, following the RAG pattern. Debug, test, and trace chains with non-deterministic outputs or complex planning strategies, visualize, and inspect the execution flow of a multi-step chain in real-time and offline. Agent/chain management is valuable throughout the entire generative AI lifecycle as a key part of the inference pipeline. It serves as an extension of the workflow/pipeline management for MLOps.

Guardrails: intercept adversarial or unsupported inputs before sending them to a foundation model. Make sure that model outputs are accurate, relevant, safe, and secure. Maintain and check the state of the conversation and active context, detect intents, and decide actions while enforcing content policies. Guardrails build upon rule-based pre/post-processing of AI inputs/outputs covered under model management.

Prompt management: create, store, compare, optimize, and version prompts. Analyze inputs and outputs and manage test cases during prompt engineering. Create parameterized prompt templates, select optimal inference-time hyperparameters and system prompts serving as the starting point during the interaction of a user with an app; and adjust prompts for each foundation model. Prompt management, with its distinct capabilities, is a logical extension of experiment management for generative AI.

LLMOps

LLMOps is a subset of the broader GenAIOps paradigm, focused on operationalizing transformer-based networks for language use cases in production applications. Language is a foundational modality that can be combined with other modalities to guide AI system behavior, for example, NVIDIA Picasso is a multimodal system combining text and image modalities for visual content production.

In this case, text drives the control loop of an AI system with other data modalities and foundation models being used as plug-ins for specific tasks. The natural language interface expands the user and developer bases and decreases the AI adoption barrier. The set of operations encompassed under LLMOps includes prompt management, agent management, and RAGOps.

Driving generative AI adoption with RAGOps

RAG is a workflow designed to enhance the capabilities of general-purpose LLMs. Incorporating information from proprietary datasets during query time and grounding generated answers on facts guarantees factual correctness. While traditional models can be fine-tuned for tasks like sentiment analysis without needing external knowledge, RAG is tailored for tasks that benefit from accessing external knowledge sources, like question answering.

RAG integrates an information retrieval component with a text generator. This process consists of two steps:

Document retrieval and ingestion—the process of ingesting documents and chunking the text with an embedding model to convert them into vectors and store them in a vector database.

User query and response generation—a user query is converted to the embedding space at a query time along with the embedding model, which in turn is used to search against the vector database for the closest matching chunks and documents. The original user query and the top documents are fed into a customized generator LLM, which generates a final response and renders it back to the user.

It also offers the advantage of updating its knowledge without the need for comprehensive retraining. This approach ensures reliability in generated responses and addresses the issue of “hallucination” in outputs.

RAGOps is an extension of LLMOps. This involves managing documents and databases, both in the traditional sense, as well as in the vectorized formats, alongside embedding and retrieval models. RAGOps distills the complexities of generative AI app development into one pattern. Thus, it enables more developers to build new powerful applications and decreases the AI adoption barrier.

GenAIOps offers many business benefits

As researchers and developers master GenAIOps to expand beyond DevOps, DataOps, and ModelOps, there are many business benefits. These include the following.

Faster time-to-market: Automation and acceleration of end-to-end generative AI workflows lead to shorter AI product iteration cycles, making the organization more dynamic and adaptable to new challenges.

Higher yield and innovation: Simplifying the AI system development process and increasing the level of abstraction, enables GenAIOps more experiments, and higher enterprise application developer engagement, optimizing AI product releases.

Risk mitigation: Foundation models hold the potential to revolutionize industries but also run the risk of amplifying inherent biases or inaccuracies from their training data. The defects of one foundation model propagate to all downstream models and chains. GenAIOps ensures that there is a proactive stance on minimizing these defects and addressing ethical challenges head-on.

Streamlined collaboration: GenAIOps enables smooth handoffs across teams, from data engineering to research to product engineering inside one project, and facilitates artifacts and knowledge sharing across projects. It requires stringent operational rigor, standardization, and collaborative tooling to keep multiple teams in sync.

Lean operations: GenAIOps helps reduce waste through workload optimization, automation of routine tasks, and availability of specialized tools for every stage in the AI lifecycle. This leads to higher productivity and lower TCO (TCO).

Reproducibility: GenAIOps helps maintain a record of code, data, models, and configurations ensuring that a successful experiment run can be reproduced on-demand. This becomes especially critical for regulated industries, where reproducibility is no longer a feature but a hard requirement to be in business.

The transformational potential of generative AI

Incorporating GenAIOps into the organizational fabric is not just a technical upgrade. It is a strategic move with long-term positive effects for both customers and end users across the enterprise.

Enhancing user experiences: GenAIOps provides optimal performance of AI apps in production. Businesses can offer enhanced user experiences. Be it through chatbots, autonomous agents, content generators, or data analysis tools.

Unlocking new revenue streams: With tailored applications of generative AI, facilitated by GenAIOps, businesses can venture into previously uncharted territories, unlocking new revenue streams and diversifying their offerings.

Leading ethical standards: In a world where brand image is closely tied to ethical considerations, businesses that proactively address AI’s potential pitfalls, guided by GenAIOps, can emerge as industry leaders, setting benchmarks for others to follow.

The world of AI is dynamic, rapidly evolving, and brimming with potential. Foundation models, with their unparalleled capabilities in understanding and generating text, images, molecules, and music, are at the forefront of this revolution.

When examining the evolution of AI operations, from MLOps to GenAIOps, LLMOps, and RAGOps, businesses must be flexible, advance, and prioritize precision in operations. With a comprehensive understanding and strategic application of GenAIOps, organizations stand poised to shape the trajectory of the generative AI revolution.

How to get started

Try state-of-the-art generative AI models running on an optimized NVIDIA accelerated hardware/software stack from your browser using NVIDIA AI Foundry.

Get started with LLM development on NVIDIA NeMo, an end-to-end, cloud-native framework for building, customizing, and deploying generative AI models anywhere.

Or, begin your learning journey with NVIDIA training. Our expert-led courses and workshops provide learners with the knowledge and hands-on experience necessary to unlock the full potential of NVIDIA solutions. For generative AI and LLMs, check out our focused Gen AI/LLM learning path.