Modern AI applications increasingly rely on models that combine huge parameter counts with multi-million-token context windows. Whether it is AI agents...

NVIDIA cuQuantum Adds Dynamic Gradients, DMRG, and Simulation Speedup

NVIDIA cuQuantum is an SDK of optimized libraries and tools that accelerate quantum computing emulations at both the circuit and device level by orders of...

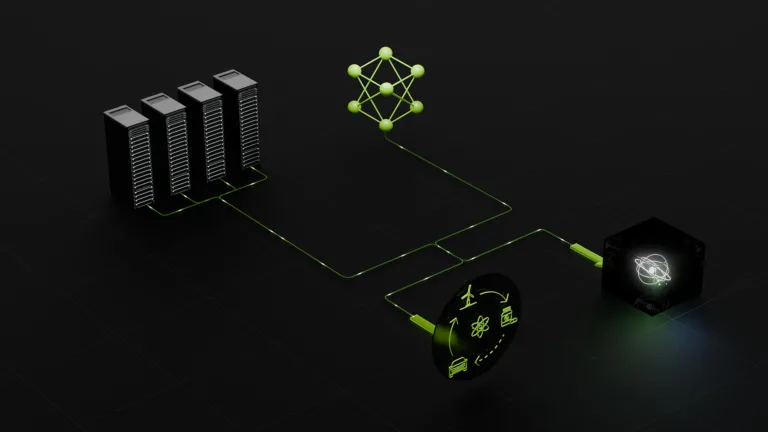

Turbocharging AI Factories with DPU-Accelerated Service Proxy for Kubernetes

As AI evolves to planning, research, and reasoning with agentic AI, workflows are becoming increasingly complex. To deploy agentic AI applications efficiently,...

LLM Inference Benchmarking: Performance Tuning with TensorRT-LLM

This is the third post in the large language model latency-throughput benchmarking series, which aims to instruct developers on how to benchmark LLM inference...

RAPIDS Adds GPU Polars Streaming, a Unified GNN API, and Zero-Code ML Speedups

RAPIDS, a suite of NVIDIA CUDA-X libraries for Python data science, released version 25.06, introducing exciting new features. These include a Polars GPU...