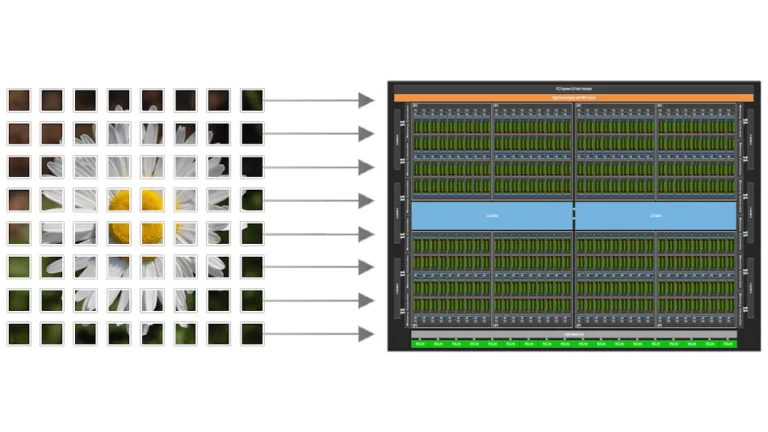

Model quantization is an effective method to reduce VRAM usage and improve inference performance on consumer devices such as NVIDIA GeForce RTX GPUs. By lowering computational and memory requirements while preserving model quality, quantization helps AI models run more efficiently in resource-constrained environments. This post walks through how to use NVIDIA Model Optimizer to quantize a…