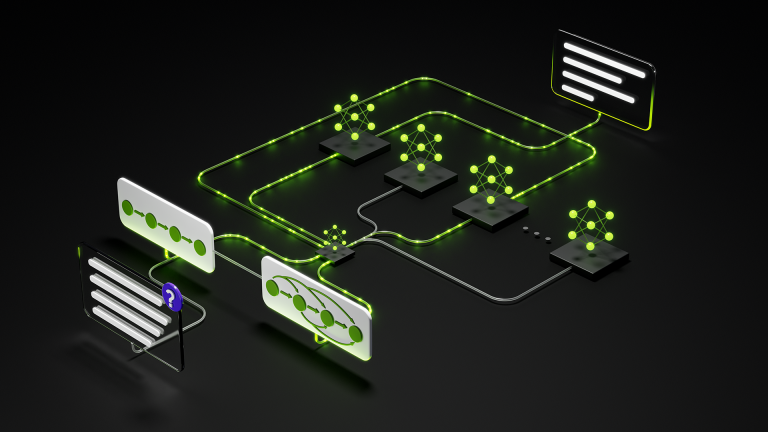

Knowledge distillation transfers the knowledge of a much larger teacher model to a smaller student model, ideally yielding a compact, easily deployable student whose accuracy is comparable to the teacher's. It has gained popularity in pretraining settings, but fewer resources are available for performing knowledge distillation during supervised fine-tuning…
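To make the teacher-to-student transfer concrete, here is a minimal sketch of one common formulation, soft-target distillation, in which the student is trained to match the teacher's temperature-softened output distribution. The text above does not say which loss is used, so treat this as an assumption rather than the method described here; the function names and the `temperature` parameter are hypothetical and chosen only for illustration.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    log_total = peak + math.log(sum(math.exp(s - peak) for s in scaled))
    return [s - log_total for s in scaled]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    Assumption: the classic soft-target loss, scaled by temperature**2 so
    gradient magnitudes stay comparable across temperatures.
    """
    log_p_teacher = log_softmax(teacher_logits, temperature)
    log_p_student = log_softmax(student_logits, temperature)
    kl = sum(
        math.exp(lp) * (lp - lq)
        for lp, lq in zip(log_p_teacher, log_p_student)
    )
    return kl * temperature ** 2


# Hypothetical logits for a single 3-class example.
teacher = [4.0, 1.0, 0.5]
student = [2.0, 1.5, 0.5]
loss = distillation_loss(student, teacher)
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge. In a fine-tuning setup it would typically be combined with the ordinary supervised loss on the labels, with a weighting that is itself a tuning choice.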