Remember Cambridge Analytica? The British political consultancy operated from 2013 to 2018 and had one mission: to hoover up the data of Facebook users without their knowledge, then use this personal information to tailor political ads that would, in theory, sway their voting intentions (for an exorbitant price, of course). When the scandal broke, the firm was accused of meddling in everything from the UK’s Brexit referendum to Donald Trump’s 2016 presidential campaign.

Well, AI is going to make that kind of stuff look like pat-a-cake. In a year that will see a presidential election in the US and a general election in the UK, the tech has reached a stage where it can deepfake candidates, mimic their voices, craft political messages from prompts and personalise them for individual users, and that barely scratches the surface. When you can already watch Biden and Trump arguing over how to rank the Xenoblade games, it’s inconceivable that the technology will stay out of these elections or, indeed, any other.

This is a serious problem. Online discourse is bad enough as it is, with partisans on either side willing to believe anything of the other and misinformation already rife. Adding outright fabricated content and AI targeting (among other things) to this mix could be both explosive and disastrous. And OpenAI, the most high-profile company in the field, knows that it may be heading into choppy waters. But while it seems good at identifying the issues, it’s unclear whether it’ll be able to actually get a handle on them.

OpenAI says it’s all about “protecting the integrity of elections” and it wants “to make sure our technology is not used in a way that could undermine this process.” There’s some bumf about all the positives AI brings, how unprecedented it is, yadda yadda yadda, then we get to the crux of the matter: “potential abuse”.

The company lists some of these problems as “misleading ‘deepfakes’, scaled influence operations, or chatbots impersonating candidates”. In terms of the first, it says DALL-E (its image-generating tech) will “decline requests that ask for image generation of real people, including candidates.” More worryingly, OpenAI says it doesn’t know enough about how its tools could be used for personal persuasion in this context: “Until we know more, we don’t allow people to build applications for political campaigning and lobbying.”

OK: so no fake imagery of the candidates, and no applications to do with campaigning. OpenAI won’t allow its software to be used to build chatbots that purport to be real people or institutions. The list goes on:

“We don’t allow applications that deter people from participation in democratic processes—for example, misrepresenting voting processes and qualifications (e.g., when, where, or who is eligible to vote) or that discourage voting (e.g., claiming a vote is meaningless).”

OpenAI goes on to detail its latest image provenance tools (which tag anything created by the latest iteration of DALL-E) and says it is testing a “provenance classifier” that can detect DALL-E imagery, with “promising early results”. This tool will soon be rolled out for testing by journalists, platforms and researchers.

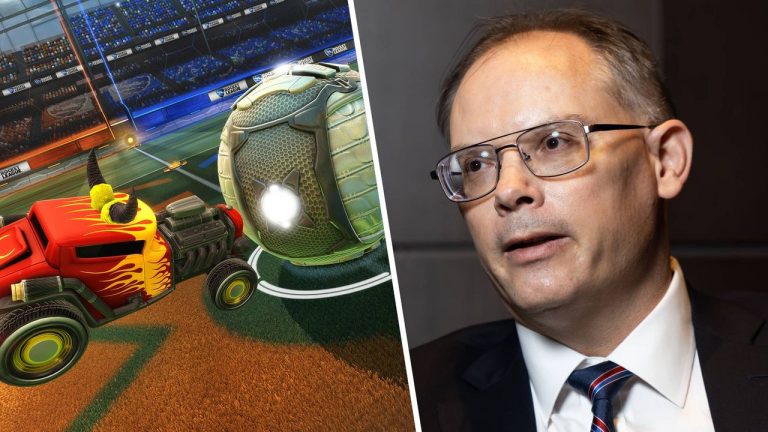

OpenAI CEO Sam Altman (Image credit: Getty Images – Anadolu Agency)

Welcome to the jungle

One of its reassurances, however, raises more questions than it answers. “ChatGPT is increasingly integrating with existing sources of information—for example, users will start to get access to real-time news reporting globally, including attribution and links. Transparency around the origin of information and balance in news sources can help voters better assess information and decide for themselves what they can trust.”

Hmm. This makes OpenAI’s tools sound like a glorified RSS feed, when the prospect of news reporting being filtered through AI is rather more troubling than that. Since their debut these tools have been used to produce all sorts of online content, including articles of a sort, but the idea of them producing material about, say, the Israel-Palestine conflict or Trump’s latest conspiracy theory sounds pretty dystopian to me.

Honestly, it all feels like OpenAI knows something bad is coming… but it doesn’t yet know what the bad thing is, or how it’s going to deal with it, or even if it can. It’s all very well to say it won’t produce deepfakes of Joe Biden, but maybe those aren’t going to be the problem. I suspect the biggest issue will be how its tools are used to amplify and disseminate certain stories and talking points, and to micro-target individuals. OpenAI is obviously aware of this, but its solutions are unproven.

Reading between the lines here, we’re driving into the dark at high speed with no headlights. OpenAI talks about stuff like directing people to CanIVote.org when asked voting questions, but things like this feel like very small beer next to the potential enormity of the problem. Most stark of all is that it expects “lessons from this work will inform our approach in other countries and regions”, which roughly translates as ‘guess we’ll see what evil shit people get up to and fix it after the fact.’

Perhaps that’s being too harsh. But the typical tech language of “learnings” and working with partners doesn’t quite feel like it deals with the biggest issue here: AI is a tool with the potential to interfere in and even influence major democratic elections. Bad actors will inevitably use it to this end, and neither we nor OpenAI knows what form that will take. The Washington Post’s famous masthead declares that “Democracy dies in darkness”. But maybe that’s wrong. Maybe what will do in democracy is chaos, lies, and so much bunk information that voters either become cult maniacs or just stay home.