CUDA 13.2 arrives with a major update: NVIDIA CUDA Tile is now supported on devices of compute capability 8.X architectures (NVIDIA Ampere and NVIDIA Ada),...

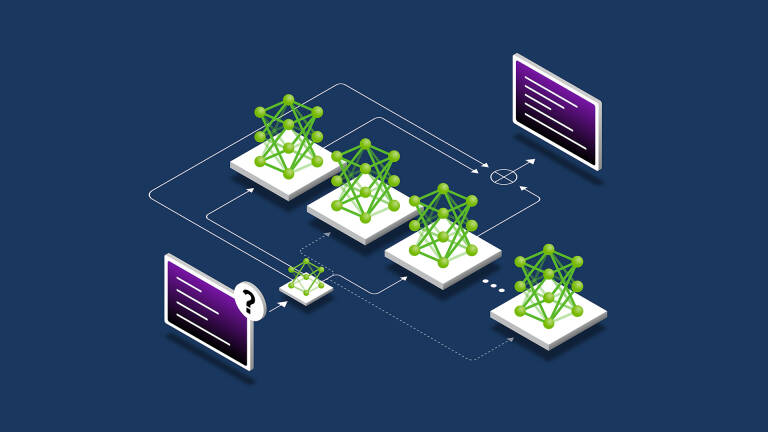

Implementing Falcon-H1 Hybrid Architecture in NVIDIA Megatron Core

In the rapidly evolving landscape of large language model (LLM) development, NVIDIA Megatron Core has emerged as the foundational framework for training massive...

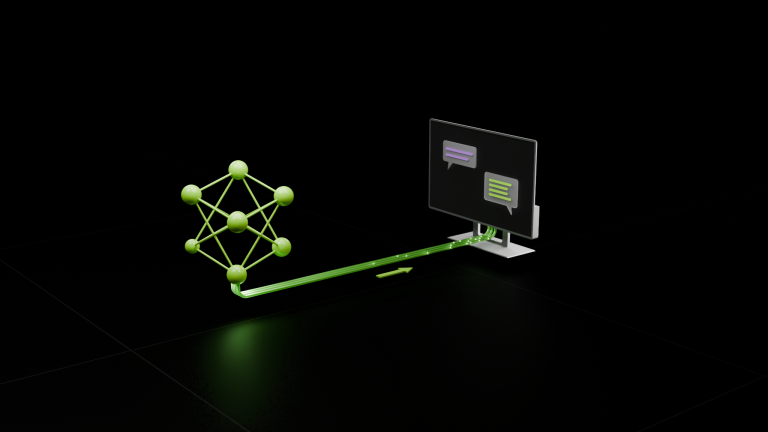

Enhancing Distributed Inference Performance with the NVIDIA Inference Transfer Library

Deploying large language models (LLMs) requires large-scale distributed inference, which spreads model computation and request handling across many GPUs and...

Removing the Guesswork from Disaggregated Serving

Deploying and optimizing large language models (LLMs) for high-performance, cost-effective serving can be an overwhelming engineering problem. The ideal...

NVIDIA Blackwell Sets STAC-AI Record for LLM Inference in Finance

Large language models (LLMs) are revolutionizing the financial trading landscape by enabling sophisticated analysis of vast amounts of unstructured data to...