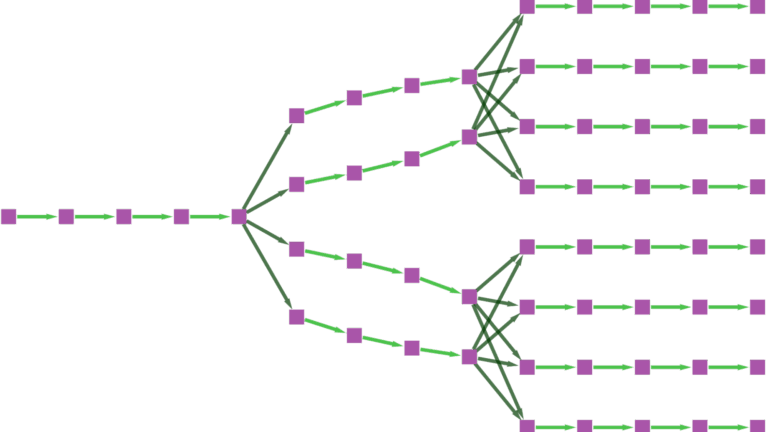

For machine learning engineers deploying LLMs at scale, the equation is familiar and unforgiving: as context length increases, attention computation costs...

Advanced Large-Scale Quantum Simulation Techniques in cuQuantum SDK v25.11

Simulating large-scale quantum computers has become more difficult as the quality of quantum processing units (QPUs) improves. Validating the results is key to...

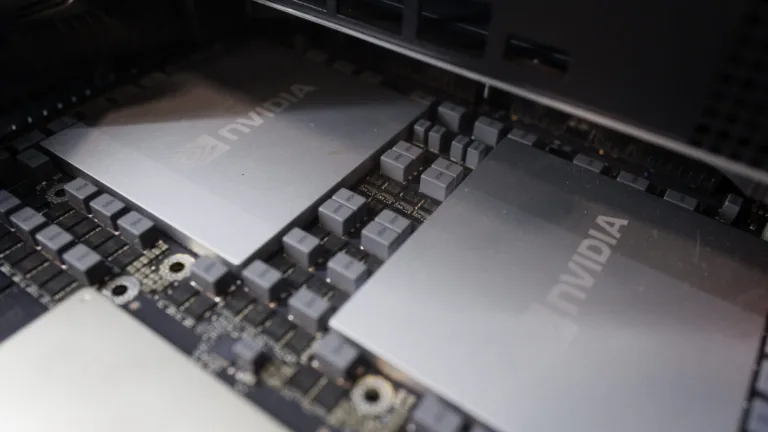

Boost GPU Memory Performance with No Code Changes Using NVIDIA CUDA MPS

NVIDIA CUDA developers have access to a wide range of tools and libraries that simplify development and deployment, enabling users to focus on the “what”...

AI Factories, Physical AI, and Advances in Models, Agents, and Infrastructure That Shaped 2025

2025 was another milestone year for developers and researchers working with NVIDIA technologies. Progress in data center power and compute design, AI...

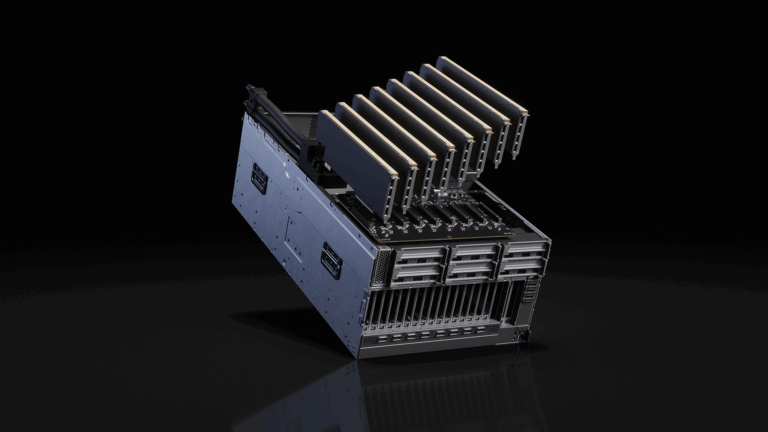

Delivering Flexible Performance for Future-Ready Data Centers with NVIDIA MGX

The AI boom reshaping the computing landscape is poised to scale even faster in 2026. As breakthroughs in model capability and computing power drive rapid...