While consumer AI offers powerful capabilities, workplace tools often suffer from disjointed data and limited context. Built with LangChain, the NVIDIA AI-Q...

Greyhawkery Comics: Cultists #32

Welcome back, avid readers! Gary Con 2026 is upon us, and by the time you are reading this I'll be in the frozen hinterlands of...

Greyhawkery Comics: Under #32

Beware, readers, and don't despair! You have made it this far in my short story, Under, and there are only six more pages to go. When...

Building the AI Grid with NVIDIA: Orchestrating Intelligence Everywhere

AI-native services are exposing a new bottleneck in AI infrastructure: As millions of users, agents, and devices demand access to intelligence, the challenge is...

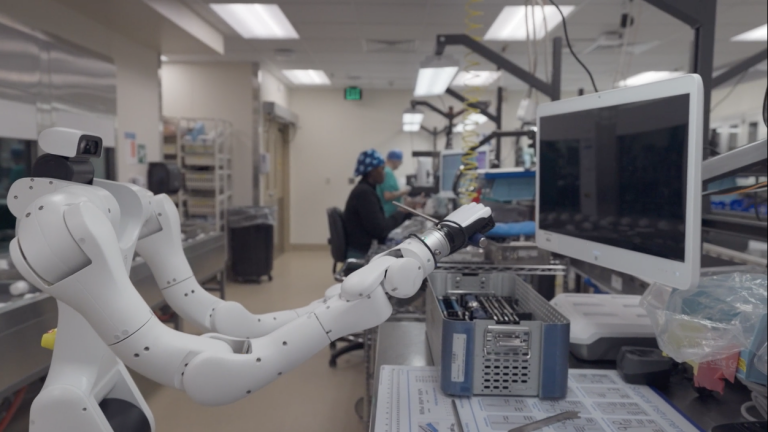

Using Simulation to Build Robotic Systems for Hospital Automation

Healthcare faces a structural demand–capacity crisis: a projected global shortfall of ~10 million clinicians by 2030, billions of diagnostic exams annually...