Higher-order optimization algorithms such as Shampoo have been effectively applied in neural network training for at least a decade. These methods have achieved...

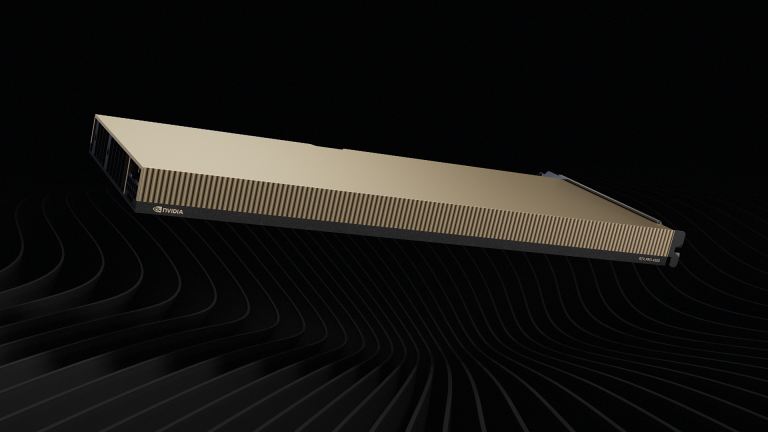

Scaling the AI-Ready Data Center with NVIDIA RTX PRO 4500 Blackwell Server Edition and NVIDIA vGPU 20

AI integration is redefining mainstream enterprise applications, from productivity software like Microsoft Office to more complex design and engineering tools...

Greyhawkery Comics: Under #35

Welcome back to another installment of my short story Under. There are only a few pages left in the tale, so let's get into it...

Run High-Throughput Reinforcement Learning Training with End-to-End FP8 Precision

As LLMs transition from simple text generation to complex reasoning, reinforcement learning (RL) plays a central role. Algorithms like Group Relative Policy...

Maximizing Memory Efficiency to Run Bigger Models on NVIDIA Jetson

The boom in open source generative AI models is pushing beyond data centers into machines operating in the physical world. Developers are eager to deploy...