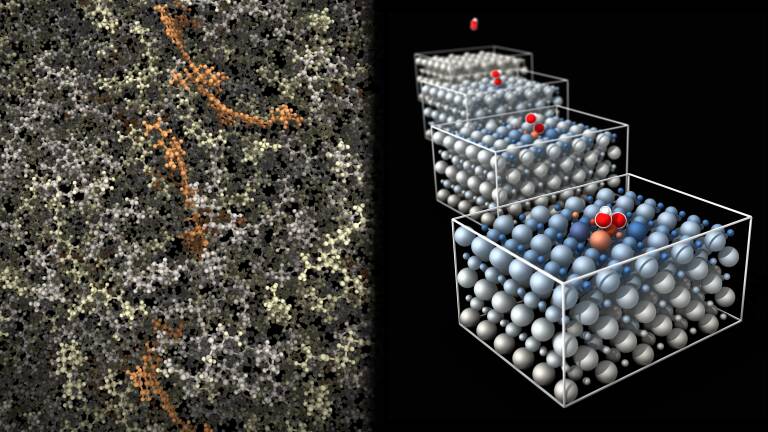

The boom in open source generative AI models is pushing beyond data centers into machines operating in the physical world. Developers are eager to deploy these models at the edge, enabling physical AI agents and autonomous robots to automate heavy-duty tasks. A key challenge is efficiently running multi-billion-parameter models on edge devices with limited memory. With ongoing constraints on…