Okay, that’s some bombastic headlining right there, but when one of the people behind the success of Nvidia’s DLSS feature reckons a new AI innovation in gaming is “gonna blow your mind”, I’m prepared to listen. The Nvidia GPU Technology Conference starts today, with Jen-Hsun Huang hitting the stage for the opening keynote, and the company’s GeForce social channels have been promising that we’ll get a look at “the future of real-time rendering” during the presentation.

Given that’s coming via its GeForce gaming channels, it’s not unreasonable to think it’s specifically referencing real-time rendering in PC games. That’s not something we’d normally expect to come out of GTC, which is traditionally focused on general-purpose GPU (GPGPU) use and, most recently, on AI.

Catch the future of real-time rendering in Jensen’s keynote tomorrow 👀 https://t.co/KFv1JoTsDu (March 15, 2026)

But combine those tweets with something Bryan Catanzaro, VP of Applied Deep Learning Research—and someone who’s worked on DLSS for the past ten years—said at GDC last week, and you can colour me excited to hear what’s going on.

During a GDC panel discussion titled “AI Trends of Today and Opportunities for Tomorrow: Ask Me Anything”, the question was asked: “What has surprised you in terms of innovation with AI in games in the past one to three months?”

Having spoken a little about DLSS and Frame Generation in response, Catanzaro goes on to describe generative AI rendering as the “most important update to the way that graphics are rendered in at least the last decade.”

“Yeah, but to your question of, what have you seen in the past month?” he continues. “I can’t tell you exactly what I’ve seen, but you’ll find out very soon, and it’s going to blow your mind.”

So, er… join me as we have our minds collectively blown, I guess.

Live blog

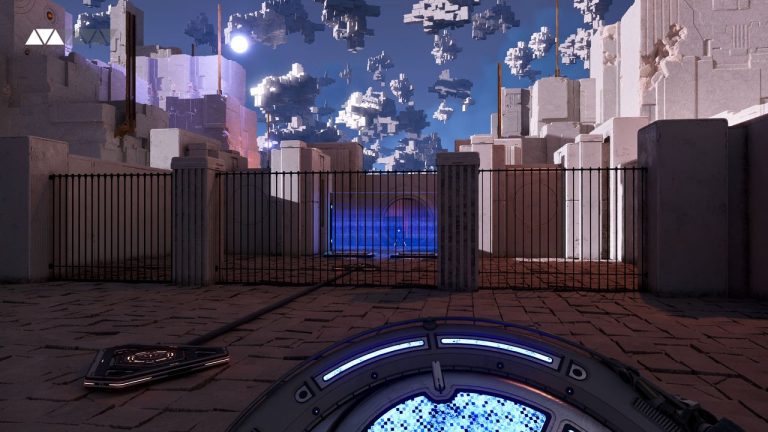

(Image credit: Nvidia/CD Projekt Red)

So, what could it be about? An update and some demo-ing of neural rendering in action in an actual game would be my first guess.

Introduced at CES 2025 alongside the RTX Blackwell series of GPUs, neural rendering and neural shaders promise big things. From enhanced AI compression techniques that deliver “next-generation asset generation” as a potential VRAM crutch (useful at a time when memory is so expensive) to RTX Mega Geometry, which is going to give Witcher 4 lots of pretty trees, sticking AI into the graphics pipeline has the potential to hugely up the fidelity of PC games.

Our Nick had some good ideas about this earlier:

“How about DLSS Ray Full Construction, an AI system that doesn’t just denoise a ray-traced scene but actually uses machine learning to generate thousands of additional ‘fake’ rays?”

It would certainly make ray/path tracing a little lighter on GPUs if they only have to actually trace a couple of real rays in the scene, rather than a couple per pixel.

Given how painfully expensive memory is for everything these days, however, I kinda think Nick ought to get on and patent RTX Ultra Memory.

“I’ve got it! Huang will hop onto the stage with a new GeForce RTX graphics card. It’ll have 10,000 CUDA cores and 4 GB of VRAM, but it will be the first to use RTX Ultra Memory, an AI-powered system that neurally compresses everything automatically and does it so quickly and so well that it effectively quadruples the VRAM and its bandwidth on your graphics card. Yours for just $1,299.”