One of the many things AI is doing is making us confront and try to clarify many of our concepts: What does it mean to code? What does it mean to be conscious? Where is the boundary between inspiration and copying? On this last point, a pair of software researchers have recently demonstrated that it’s technically possible and, based on our current understanding of copyright and related concepts, seemingly legal to use AI to scrape and reforge entire suites of open-source software, essentially cloning them and making them proprietary.

In their presentation (via The PrimeTime), Dylan Ayrey, founder of open-source software company Truffle Security, and Mike Nolan, a software architect at the UN Development Program, explain that if you pay a small fee you can use their malus.sh AI service to recreate any open-source project. The result, according to the website, is “legally distinct code with corporate-friendly licensing. No attribution. No copyleft. No problems.”

It’s all couched in terms of the history of copyright, which they cover in some detail. But the most relevant fact is that ever since the US Supreme Court’s decision in Baker v. Selden, copyright has protected expression rather than ideas. This ultimately led to clean-room design, a means of copying something’s design (ie, its idea) without copying its expression, thereby circumventing copyright.

The basic idea is that you create a detailed description or set of specifications for a creation, whether that’s a book, a piece of software, or something else, and then you have someone who has never seen the original create the new book or software from that description alone. Then, in theory, you’ve copied the original without technically breaking any copyright.

When it comes to software, some of the original and most infamous cases of clean-room design came from Phoenix Technologies, which cloned the IBM PC BIOS by having one team write a specification of how the original behaved and then giving that specification to a separate developer, who had never seen the original code, to implement it fresh.

Ayrey and Nolan’s presentation shows how AI can do something similar, but orders of magnitude quicker and ‘easier’ (setting aside, of course, the time and energy that went into training the AI model in the first place). Part of the argument for clean-room design being reasonable is that a great deal of work usually goes into understanding the original creation, creating a specification from it, and then building a new one from scratch.

But can we say the same of AI clean-rooming, when all it takes is a couple of AI bots, some prompting, and a minuscule amount of time? The clear answer, in my mind at least, is no.

And that’s not even considering the possibility that the AI model was trained on the very code it’s then tasked with recreating. Can an AI ever wall itself off from that and rely solely on the new specification you give it?

Given there are such problems, you might be wondering why the researchers made the website. It seems clear to me, from the presentation, that as well as being a little tongue-in-cheek, it’s also meant to highlight the problem that AI is posing for open-source software.

There is, however, also an argument to be made for copying open-source software and making it proprietary. For one, relying on open-source software can open companies and users up to vulnerabilities. For instance, the researchers explain that the widely used open-source Log4j logging framework had a vulnerability called Log4Shell that had tons of companies around the world scrambling. Even Minecraft Java Edition required immediate patching.

One of the big problems with such vulnerabilities is that you can be left at the mercy of the open-source maintainers to fix them, and sometimes that might be just one person. Open-source maintainers, given they’re working for free, might not have as big an incentive to fix things as a developer of proprietary software. And on the maintainer’s side, they can come under a lot of pressure to make changes from companies that rely on their software, despite maintaining the project for free.

The researchers explain other problems with open-source software, such as licensing costs and complications, but the ultimate problem the Malus project serves to highlight is that of AI and copyright. As one of the duo explains in a mock back-and-forth: “If we don’t do it, someone else will.”

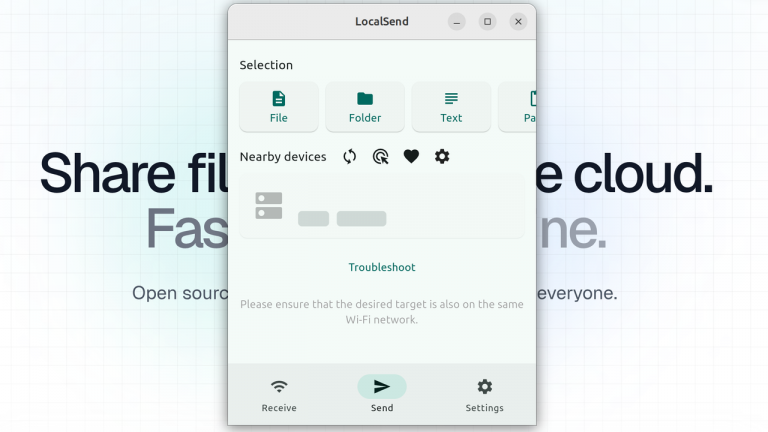

(Image credit: Malus)

Such a statement can never be a moral justification, and in fact seems like cognitive distortion 101. But it might nevertheless be true, and it does highlight the issue that we should be focusing on: How can copyright deal with AI clean-rooming, and how can open-source software survive if it can be quickly, easily, and legally copied and made proprietary?

Near the end, one of the researchers asks: “How are you going to stop me? What tools, what system could you ever possibly use to stop all of this?”

And isn’t that just the question?

Another pertinent quote, from an AI-generated video that closes out their presentation:

“Consider a turkey that is fed every day. Every single feeding will firm up the bird’s belief that it is the general rule of life to be fed every day by friendly members of the human race looking out for its best interests. As a politician would say on the afternoon of the Wednesday before Thanksgiving, something unexpected will happen to the turkey. It will incur a revision of belief.”

I can only assume the “revision of belief”—ie, the slaughter—the researchers are hinting at is where all our open-source software is cloned and made proprietary by AI. The difference being, of course, that these codebases weren’t knowingly fattened for the slaughter.

Regardless, it does seem a pertinent warning. In which case, what are we going to do about it? Will open-source code incur a “revision of belief”, or can something be done about it before it’s too late?