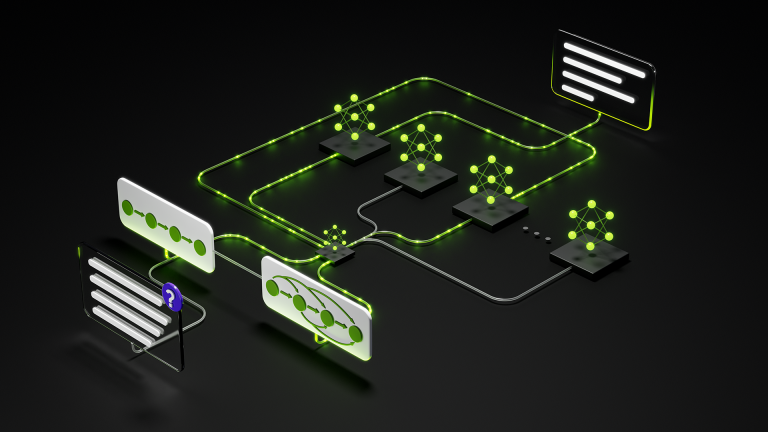

Deploying and optimizing large language models (LLMs) for high-performance, cost-effective serving can be an overwhelming engineering problem. The ideal configuration for a given workload, including the hardware, the parallelism strategy, and the prefill/decode split, lies in a massive, multi-dimensional search space that is impractical to explore manually or through exhaustive testing. AIConfigurator…