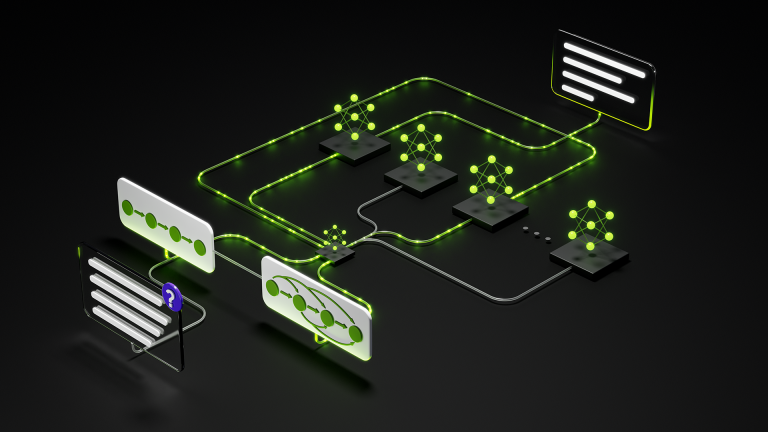

In this post, we dive into one of the most critical workloads in modern AI: Flash Attention. You’ll learn how to implement Flash Attention using NVIDIA…

Environment requirements: See the quickstart doc for more information on installing cuTile Python.

The attention mechanism is the computational heart of transformer models. Given a sequence of tokens, attention enables each token to “look at” every other…
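To make the idea of each token “looking at” the others concrete, here is a minimal sketch of standard scaled dot-product attention in plain Python. It is a reference illustration only, not Flash Attention and not cuTile code: the names `softmax` and `naive_attention` are hypothetical, and the list-of-lists layout is an assumption chosen for readability rather than speed.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)  # subtract the max so exp() cannot overflow
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def naive_attention(q, k, v):
    """Reference attention: softmax(Q K^T / sqrt(d)) V.

    q, k, v are lists of token vectors (lists of floats).
    q and k share dimension d; k and v have the same number of tokens.
    """
    d = len(q[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for qi in q:
        # Score this query token against every key token.
        scores = [scale * sum(a * b for a, b in zip(qi, kj)) for kj in k]
        # Turn scores into weights that sum to 1.
        weights = softmax(scores)
        # Output is the weighted average of the value vectors.
        out.append([
            sum(w * vj[c] for w, vj in zip(weights, v))
            for c in range(len(v[0]))
        ])
    return out
```

This version materializes a full row of scores for every query token, so the work and intermediate storage grow with the square of the sequence length. That cost is what Flash Attention is designed to avoid.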