As AI models extend their capabilities to solve more sophisticated challenges, a new scaling law known as test-time scaling or inference-time scaling is...

New research says ChatGPT likely consumes '10 times less' energy than we initially thought, making it about the same as Google search

It's easy to slate AI in all its manifestations—trust me, I should know, I do so often enough—but some recent research from Epoch AI (via...

How to unlock the Barrow-Dyad exotic in Destiny 2

We knew Barrow-Dyad was coming, but even so, this is a surprise. Bungie revealed the new exotic Strand SMG in a preview stream before last...

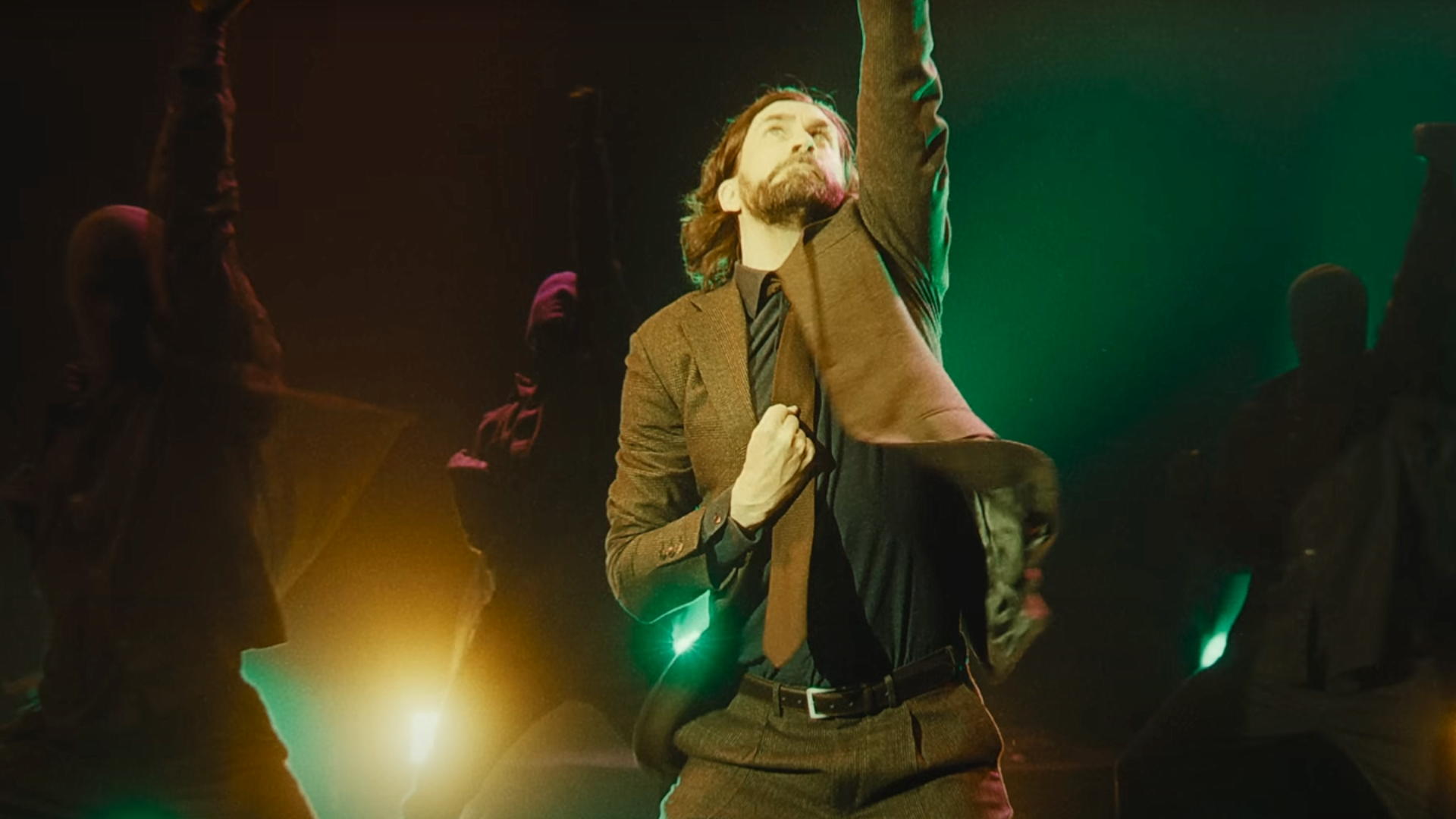

After more than a year and 2 million sales, Alan Wake 2 is finally turning a profit for Remedy

Alan Wake 2, Remedy's gorgeous oddity, is finally making the developer some cash, over a year after its launch. In a financial report published today,...

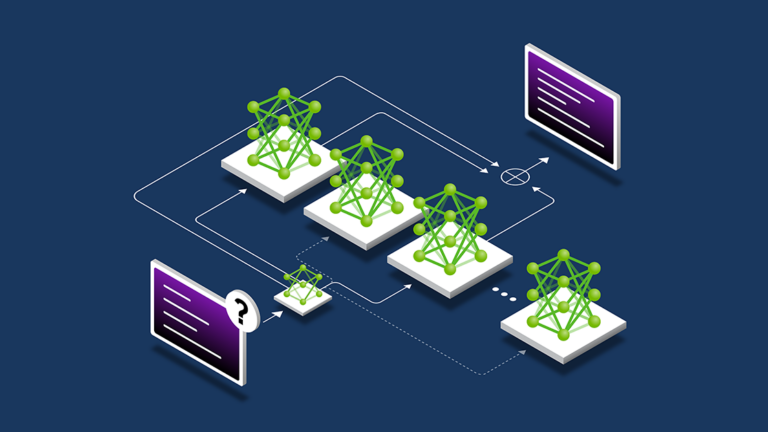

LLM Model Pruning and Knowledge Distillation with NVIDIA NeMo Framework

Model pruning and knowledge distillation are powerful cost-effective strategies for obtaining smaller language models from an initial larger sibling. ...